Artificial Neural Networks

Neural Networks

The nervous system is the system that responds to information received from the environment.

A neuron is shown above.

For example, when we want to pick up a pen from the table, we grip it and squeeze it just enough. If we squeeze too hard, the pen may be damaged; if we do not squeeze hard enough, it slips from our hand and we cannot lift it. This is where our biological neural networks come into play.

First, information about how hard the pen is pressing travels through our nervous system, and we keep increasing the force we apply to the pen until a certain value (the pressure the pen exerts on our hand) is reached.

Similarly, we don’t feel the air on our hands when we’re sitting in our room, but when we put a hand out of the window of a moving car, we can feel the air (wind). In this example, we feel the pressure of the air on our hand because that pressure has reached or exceeded a certain threshold.

Note: the minimum pressure required for us to feel in this example varies from person to person.

As another example, our nervous system also allows us to see.

Artificial Neural Networks (ANN)

Artificial neural networks (ANNs) are a machine learning method. The purpose of ANNs is to create machines that can make decisions and interpret data by mimicking the human nervous system.

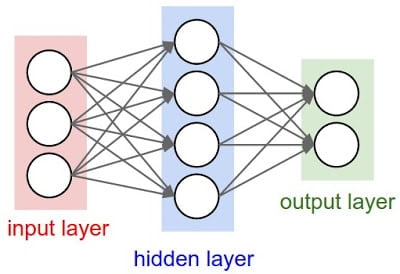

The chart above shows an artificial neural network.

As you can see, the artificial neural network consists of three layers: the input layer, the hidden layer, and the output layer.

Note: the input layer does not perform any calculations; it only passes the input data on to the next layer.

As you can see, the cells (neurons) are connected to each other; these connections correspond to the synapses in our nervous system. From here on, we will refer to these connections as weights.
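To make the layer structure concrete, here is a minimal pure-Python sketch of one forward pass through a small network with 2 inputs, 3 hidden neurons and 1 output neuron. The layer sizes, the weight values, the use of ReLU in the hidden layer and the helper name `dense_layer` are all assumptions for illustration. Each row of a weight matrix holds the weights (connections) coming into one neuron of the next layer.

```python
from typing import Callable, List

Vector = List[float]
Matrix = List[List[float]]  # one row of incoming weights per neuron


def relu(z: float) -> float:
    return max(0.0, z)


def identity(z: float) -> float:
    return z


def dense_layer(inputs: Vector, weights: Matrix, biases: Vector,
                activation: Callable[[float], float]) -> Vector:
    """Compute every neuron of one layer from the previous layer's outputs."""
    outputs = []
    for w_row, b in zip(weights, biases):
        # Each connection (weight) scales one incoming value
        net = sum(w * v for w, v in zip(w_row, inputs)) + b
        outputs.append(activation(net))
    return outputs


# Hypothetical weights: 2 inputs -> 3 hidden neurons -> 1 output neuron
W_hidden = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]]
b_hidden = [0.0, 0.1, -0.1]
W_out = [[1.0, -1.0, 0.5]]
b_out = [0.2]

x = [1.0, 2.0]                                # input layer: data only, no calculation
h = dense_layer(x, W_hidden, b_hidden, relu)  # hidden layer
y = dense_layer(h, W_out, b_out, identity)    # output layer
print(h, y)
```

Keeping the input layer as plain data mirrors the note that it does no calculation; all the work happens in the layers that have weights.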

Now let’s look in detail at how a calculation takes place in a neural network.

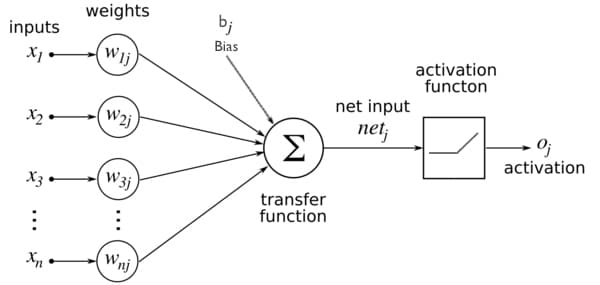

The chart above shows an artificial neural cell (neuron).

As you can see, our cell receives n inputs (the inputs are denoted by x). Each input is multiplied by its weight, the products are summed, and the bias value is added; the result is the net input. The net input is passed through the activation function, and we get the output (denoted by y).
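In formula form, with inputs x_1 … x_n, weights w_1 … w_n and bias b: net = w_1·x_1 + w_2·x_2 + … + w_n·x_n + b, and y = f(net), where f is the activation function. The sketch below follows those steps one by one for a single neuron; the input, weight and bias values are hypothetical, and ReLU is assumed as the activation function.

```python
from typing import Callable, Sequence


def relu(z: float) -> float:
    return max(0.0, z)


def neuron(inputs: Sequence[float], weights: Sequence[float], bias: float,
           activation: Callable[[float], float]) -> float:
    """One artificial neuron, following the steps described above."""
    if len(inputs) != len(weights):
        raise ValueError("every input x_i needs exactly one weight w_i")
    # 1) multiply each input by its weight, 2) sum the products
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # 3) add the bias to get the net input
    net = weighted_sum + bias
    # 4) pass the net input through the activation function to get the output y
    return activation(net)


x = [2.0, 3.0, -1.0]  # hypothetical inputs x_1..x_3
w = [0.5, -1.0, 2.0]  # hypothetical weights w_1..w_3
print(neuron(x, w, 5.0, relu))  # net = 1 - 3 - 2 + 5 = 1, prints 1.0
print(neuron(x, w, 2.0, relu))  # net = -2, ReLU gives 0, prints 0.0
```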

Activation functions

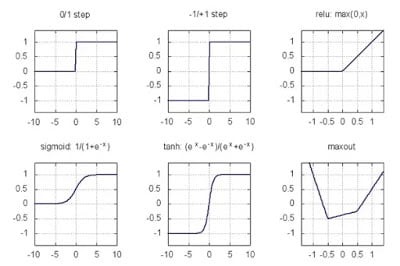

Many activation functions exist, and you can find plenty of them on the internet. The most popular of these is the ReLU (Rectified Linear Unit) function.

If the input is less than or equal to 0, ReLU returns 0; otherwise, it returns the input unchanged. In other words, ReLU(x) = max(0, x).
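The rule above translates directly into code. This is a minimal sketch; the function name `relu` is our own choice.

```python
def relu(x: float) -> float:
    """ReLU: 0 for inputs <= 0, otherwise the input itself (same as max(0, x))."""
    return x if x > 0 else 0.0


for value in (-3.0, 0.0, 2.5):
    print(value, "->", relu(value))
# -3.0 -> 0.0
# 0.0 -> 0.0
# 2.5 -> 2.5
```

Part of ReLU's popularity comes from how cheap it is: a single comparison, with no exponentials involved.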

The chart above shows popular activation functions.
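The exact set of functions in the chart is an assumption here. The sketch below implements several activation functions that commonly appear in such charts: the step (threshold) function, sigmoid, tanh and ReLU. The sigmoid is written in a numerically stable form so that `math.exp` never overflows for large negative inputs.

```python
import math


def step(x: float) -> float:
    # Threshold function: outputs 1 once the input reaches 0, much like
    # feeling the wind only once its pressure reaches a threshold
    return 1.0 if x >= 0 else 0.0


def sigmoid(x: float) -> float:
    # Squashes any input into (0, 1); split in two so math.exp never overflows
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def tanh(x: float) -> float:
    # Squashes any input into (-1, 1)
    return math.tanh(x)


def relu(x: float) -> float:
    return max(0.0, x)


for name, f in [("step", step), ("sigmoid", sigmoid), ("tanh", tanh), ("relu", relu)]:
    print(name, [round(f(v), 3) for v in (-2.0, 0.0, 2.0)])
```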

Training In Neural Networks

Neural networks are trained by updating the weights and bias values mentioned above.

The goal is to find the best weights and bias values, that is, the values that give the lowest error.
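The text does not say how the weights are updated. A common choice, assumed here, is gradient descent: each weight and the bias are moved a small step (the learning rate `lr`) in the direction that reduces the error. The sketch uses a single linear neuron (identity activation) and the squared error so that the derivatives stay simple. `predict`, `squared_error` and `gradient_step` are hypothetical helper names, and the sample values are made up.

```python
from typing import List, Tuple


def predict(x: List[float], w: List[float], b: float) -> float:
    # Linear neuron (identity activation) to keep the derivative simple
    return sum(xi * wi for xi, wi in zip(x, w)) + b


def squared_error(x: List[float], w: List[float], b: float, target: float) -> float:
    return (predict(x, w, b) - target) ** 2


def gradient_step(x: List[float], w: List[float], b: float, target: float,
                  lr: float = 0.05) -> Tuple[List[float], float]:
    """One gradient-descent update of the weights and bias for a single sample."""
    error = predict(x, w, b) - target
    # d(error^2)/dw_i = 2 * error * x_i   and   d(error^2)/db = 2 * error
    new_w = [wi - lr * 2 * error * xi for wi, xi in zip(w, x)]
    new_b = b - lr * 2 * error
    return new_w, new_b


x, target = [1.0, 2.0], 3.0  # one hypothetical training sample
w, b = [0.0, 0.0], 0.0       # starting weights and bias
before = squared_error(x, w, b, target)
w, b = gradient_step(x, w, b, target)
after = squared_error(x, w, b, target)
print(before, after)         # the error shrinks after the update
```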

In this article, we will discuss how to find the optimal weights and bias values for a neural network.

Important points in training:

– Training continues until the best result is reached (the desired result, i.e. the highest test accuracy). Since the desired result may never be reached, it is important to put a limit on training.

– Having a sufficiently large data set is very important for performance.
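The first point can be sketched as a training loop that stops either when the desired result is reached or when an epoch limit is hit. Assumptions: the model is a single linear neuron trained with gradient descent, the "desired result" is a training mean squared error below `target_error` (the text speaks of test accuracy; a real setup would measure on held-out data), and `train`, `max_epochs` and `target_error` are hypothetical names. The data is made up.

```python
from typing import List, Tuple

Sample = Tuple[List[float], float]


def predict(x, w, b):
    # Linear neuron: weighted sum plus bias
    return sum(xi * wi for xi, wi in zip(x, w)) + b


def mean_squared_error(samples, w, b):
    return sum((predict(x, w, b) - t) ** 2 for x, t in samples) / len(samples)


def train(samples: List[Sample], w: List[float], b: float, lr: float = 0.05,
          max_epochs: int = 1000, target_error: float = 1e-6):
    """Train until the error is low enough, but never for more than max_epochs."""
    mse = mean_squared_error(samples, w, b)
    for epoch in range(1, max_epochs + 1):
        for x, t in samples:
            error = predict(x, w, b) - t
            # Gradient-descent update for one sample (squared error)
            w = [wi - lr * 2 * error * xi for wi, xi in zip(w, x)]
            b -= lr * 2 * error
        mse = mean_squared_error(samples, w, b)
        if mse <= target_error:
            return w, b, epoch, mse  # desired result reached
    return w, b, max_epochs, mse     # limit reached; result may fall short


# Hypothetical data generated from y = 2*x1 - x2 + 1
data = [([0.0, 0.0], 1.0), ([1.0, 0.0], 3.0), ([0.0, 1.0], 0.0), ([1.0, 1.0], 2.0)]
w, b, epochs, mse = train(data, [0.0, 0.0], 0.0)
print(f"stopped after {epochs} epochs, mse={mse:.2e}, w={w}, b={b:.3f}")
```

Calling `train(data, [0.0, 0.0], 0.0, max_epochs=3)` shows the other exit: the loop stops at the limit even though the target error has not been reached.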

Epoch:

One complete pass of the entire data set through the neural network, forward and back (with the weights being updated), is called an epoch.

Increasing the number of epochs does not necessarily yield better results. On the contrary, the network may start memorizing the training data (overfitting), and then its performance on new data (data not used in training) may fall short of what we want. Too few epochs, on the other hand, may also fail to deliver the desired performance.
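One common way to handle this trade-off, not described in the text itself, is early stopping: after every epoch, measure the error on data that was not used for training, and stop once it has not improved for a few epochs (`patience`). The function name, the `patience` parameter and the error values below are hypothetical; the values are made up only to show the typical falling-then-rising pattern.

```python
from typing import List, Tuple


def early_stopping(val_errors: List[float], patience: int = 3) -> Tuple[int, int]:
    """Return (best_epoch, stop_epoch) for a list of per-epoch validation errors.

    Training stops once the validation error has not improved for `patience`
    consecutive epochs. Epochs are numbered from 1; (0, 0) means no epochs.
    """
    best_error = float("inf")
    best_epoch = 0
    for epoch, err in enumerate(val_errors, start=1):
        if err < best_error:
            # New best epoch (a real training loop would save the weights here)
            best_error, best_epoch = err, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch  # no improvement for `patience` epochs
    return best_epoch, len(val_errors)


# Made-up validation errors: they fall at first, then rise again as the
# network starts memorizing the training data
errors = [0.90, 0.60, 0.45, 0.40, 0.42, 0.47, 0.55, 0.61]
print(early_stopping(errors))  # (4, 7): best at epoch 4, stop at epoch 7
```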

Batch:

Within an epoch, the samples in the data set pass through the neural network one after another, and the results are held until the number of samples reaches the batch size; then the error is calculated over that batch and optimization is performed (the weights are updated).
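The batch mechanism can be sketched as mini-batch gradient descent. This is a minimal sketch under assumptions: a single linear neuron, squared error, gradients averaged over each batch, and the hypothetical helper names `make_batches` and `train_one_epoch`. The key point is that the weights change only once per batch, so one epoch over N samples performs ceil(N / batch_size) updates.

```python
import math
from typing import List, Tuple

Sample = Tuple[List[float], float]


def make_batches(data: List[Sample], batch_size: int) -> List[List[Sample]]:
    """Split the data set into consecutive batches of at most batch_size samples."""
    return [data[i:i + batch_size] for i in range(0, len(data), batch_size)]


def mean_squared_error(samples: List[Sample], w: List[float], b: float) -> float:
    return sum((sum(xi * wi for xi, wi in zip(x, w)) + b - t) ** 2
               for x, t in samples) / len(samples)


def train_one_epoch(data: List[Sample], w: List[float], b: float,
                    batch_size: int, lr: float = 0.05):
    """One epoch of mini-batch gradient descent; returns (w, b, number_of_updates)."""
    updates = 0
    for batch in make_batches(data, batch_size):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        # Pass every sample of the batch through the neuron, accumulating gradients
        for x, t in batch:
            error = sum(xi * wi for xi, wi in zip(x, w)) + b - t
            grad_w = [g + 2 * error * xi for g, xi in zip(grad_w, x)]
            grad_b += 2 * error
        # Only at the end of the batch are the weights updated (averaged gradient)
        n = len(batch)
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
        updates += 1
    return w, b, updates


# Hypothetical data: 10 samples from y = 2x + 1
data = [([i / 10], 2 * (i / 10) + 1) for i in range(10)]
print([len(batch) for batch in make_batches(data, 4)])  # [4, 4, 2]
w, b, n_updates = train_one_epoch(data, [0.0], 0.0, batch_size=4)
print(n_updates, math.ceil(len(data) / 4))               # 3 3
```

A batch size of 1 updates the weights after every sample, while a batch size equal to the whole data set updates them once per epoch; values in between trade update frequency against how noisy each gradient estimate is.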

The following animation shows a sample neural network training process:

I hope it’s useful.

I look forward to your questions, requests, and suggestions.